AI? Meet Academia…

Students in Josh de Leeuw’s Introduction to Cognitive Science class spend their first assignment sparring about the mind’s complexities with Pat, an artificial intelligence chatbot he created. Pat asks: “Can the richness of our mental experiences be reduced to the firing of neurons?”

From there, students engage with Pat in a back-and-forth dialogue designed to encourage critical thinking and assess their grasp of the course’s early philosophical concepts. It’s a modern version of an oral exam. De Leeuw ’08, an Associate Professor of Cognitive Science at Vassar, trained Pat with course materials. “The AI tools already out there just help students [do things],” he says. “ChatGPT won’t come back with questions.” Chat with Pat encourages students to think more deeply about alternative perspectives. The focus shifts to broadening one’s understanding of a topic, rather than merely using AI as a shortcut to answers.

Students submit two reflective paragraphs about their experience, plus their Chat with Pat transcript, allowing de Leeuw to review their insights and how they interacted with the AI. He hopes assignments like these will help to guide Vassar students’ use of AI—both on campus and beyond.

De Leeuw is one of a growing number of professors actively grappling with AI’s place in higher education and doing what they can to ensure it’s integrated into campuses in an ethical and safe manner.

It’s important “to teach students to use AI as a tool to invest in their learning,” de Leeuw says. “What I hope is that by getting something like the Chat with Pat assignment early on in their college education, students can see there’s another way you can use these tools other than just asking it to do things for you.”

AI as a supplement, not a substitute

Since OpenAI released ChatGPT in 2022, college students have rapidly embraced AI to outline essays, generate practice questions, and even complete entire assignments. Some 86 percent of students globally regularly use AI in their studies, according to a Digital Education Council Global AI Student Survey. At Vassar from 2024 to 2025, the number of first-year students reporting experience with AI and related technologies increased roughly fourfold, according to Vassar’s Chief Information Officer Carlos Garcia.

On college and university campuses—where deep learning, scholarship, and personal growth are hallmarks—administrators and faculty walk a fine line: embracing technological progress without compromising critical thinking or creativity. One recent study from MIT’s Media Lab found that participants who wrote SAT essays with ChatGPT showed the weakest neural activity when compared with students who used a traditional search engine or no tool at all. As they wrote subsequent essays, students also fell behind in their ability to quote from the paper they had just written, and they began to mostly copy and paste.

“AI is looking like it might be a bigger disruption to higher education than the pandemic was,” says Assistant Professor of Computer Science Jonathan Gordon ’07. “We’re looking at a long-term change that’s making us rethink all of our classes.”

Vassar professors recently mulled over AI’s role in teaching and learning at a Vassar Pedagogy in Action workshop—“A Beginner’s Guide to Assembling Your AI Toolkit.” One idea was to monitor how Vassar students are using AI by performing an annual baseline study. “Right now we’re making a lot of assumptions, some true, some not,” says participant and Professor and Chair of History on the Marion Musser Lloyd ’32 Chair Ismail Rashid.

“I wanted to introduce it in a transparent, and not accusatory, way so students know we’re aware AI could be useful,” says Rashid. “AI is going to be another tool that will help students not only acquire but also process information.” He believes that AI will be transformational for accelerating students’ understanding of existing research. “It’s time they can dedicate to furthering their projects or their research studies,” he says.

Other Vassar professors are using AI to crunch numbers, code, and identify readings for class. De Leeuw uses it to gain a deeper understanding of new mathematical concepts.

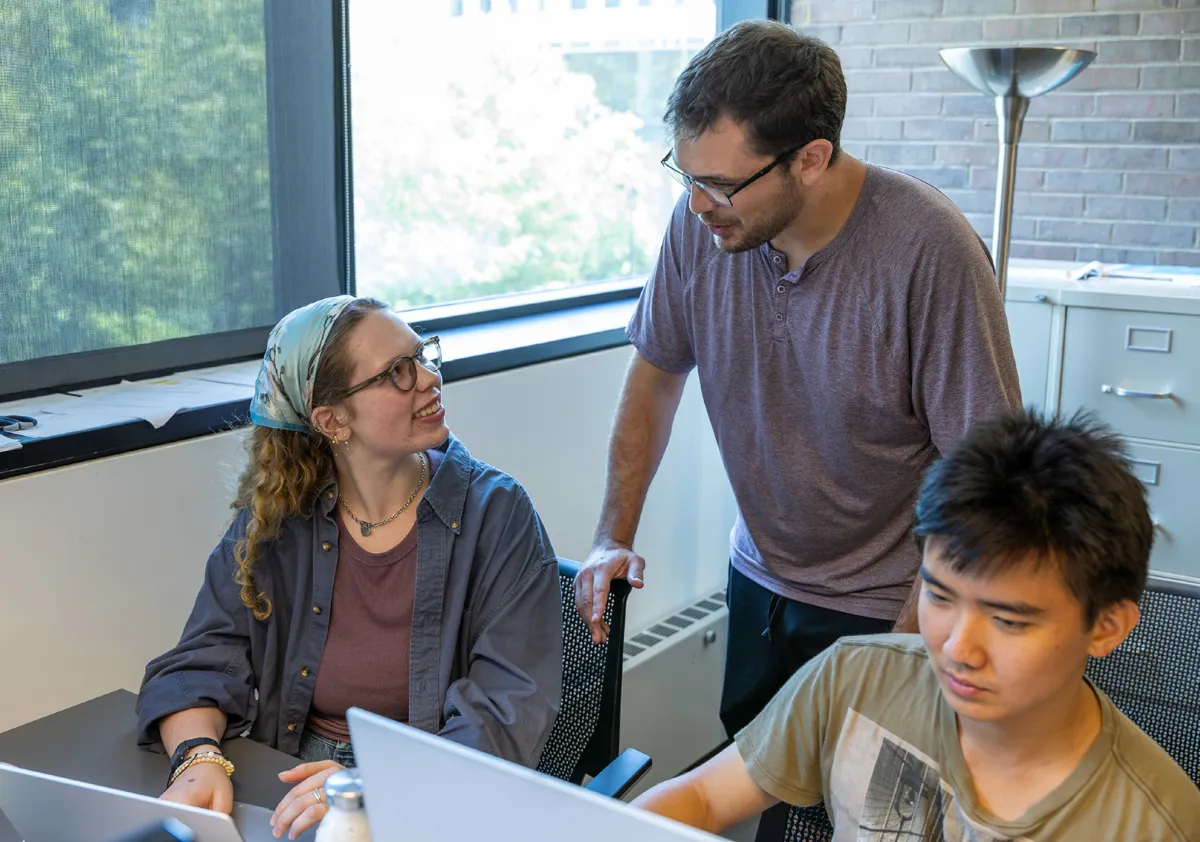

Last summer, as part of an Undergraduate Research Summer Institute (URSI) project, he and two Vassar students ran a study that assessed whether initially engaging with a chatbot on a challenging topic increases a person’s willingness to interact with people who hold opposing views. Results are forthcoming. But the work has implications for how AI is used in the classroom. De Leeuw theorizes that engaging first with AI may help students with minority views to feel more comfortable speaking up in class.

A key question, says de Leeuw, is “Are we maximizing our agency?” Is AI helping students and faculty to accomplish their goals through co-creating, or is it minimizing people’s agency by offloading tasks to AI systems? “Higher ed is still fundamentally about human relationships, building connections,” de Leeuw says; there’s still value in the human brain, in how humans think about things.

“One of the goals in higher education should be, how do we expose students to positive use cases and teach skills needed to work with these systems and evaluate them,” says de Leeuw, “to be critical of what these systems are doing, and how what they’re doing might be impacting our thoughts and agency.” Faculty will have to weave these lessons into coursework. One possibility de Leeuw poses is for Vassar to offer a foundational workshop during orientation for first-year students on the positive and negative uses of AI.

Prioritizing the process over the final product

John Long, Professor of Biology and Professor and Chair of Cognitive Science on the John Guy Vassar Chair, is intentional about differentiating helpful AI uses from potentially hurtful ones with his students. In his Introduction to Cognitive Science class, one assignment is particularly tempting to outsource to AI: critiquing and then rewriting a science news article based on a research study. During office hours, Long explains the importance of writing and self-editing—without AI.

“It’s not about the final product, it’s about the process,” he says. “We talk about how, when you don’t do your own editing, when you let ChatGPT do your editing, you’re missing out on this skill that helps you evaluate the quality and veracity of any piece of writing—including your own.” Increasingly, as AI becomes more prevalent in classrooms, professors will need to be even more intentional about helping students to hone these types of life skills—critical reading and thinking; wise, fact-based decision-making; accepting accountability; and effectively working with people from a variety of backgrounds.

“The goal should be learning and growing as a person, not getting an A,” adds Gordon. “It’s about what you get out of class that changes you as a person, or the way you think about the world—that’s the valuable part, that’s what we should be optimizing for.”

Gordon is leaning toward letting students in his 300-level Natural Language Processing project-based class use AI—with a caveat: They’ll have to meet with him one-on-one to talk him through the project after completion, and that will figure into their grade. “Can they defend the claims in their report?” he says. In the entry-level course Programming with Data, to be taught this fall, he and other professors will spearhead discussions about the ethics surrounding the use of people’s data to train AI, AI-related job losses, and AI’s climate impact.

“If students are thinking about this in 100-level classes, then maybe they do use AI more thoughtfully in those 200 and 300 classes,” he says. He prefers these types of proactive approaches to guiding students on AI rather than punitive ones. “AI detection tools don’t work that reliably,” Gordon says. “They just turn people from cheaters into people who are working to hide their cheating, and that’s even worse than just the cheating.”

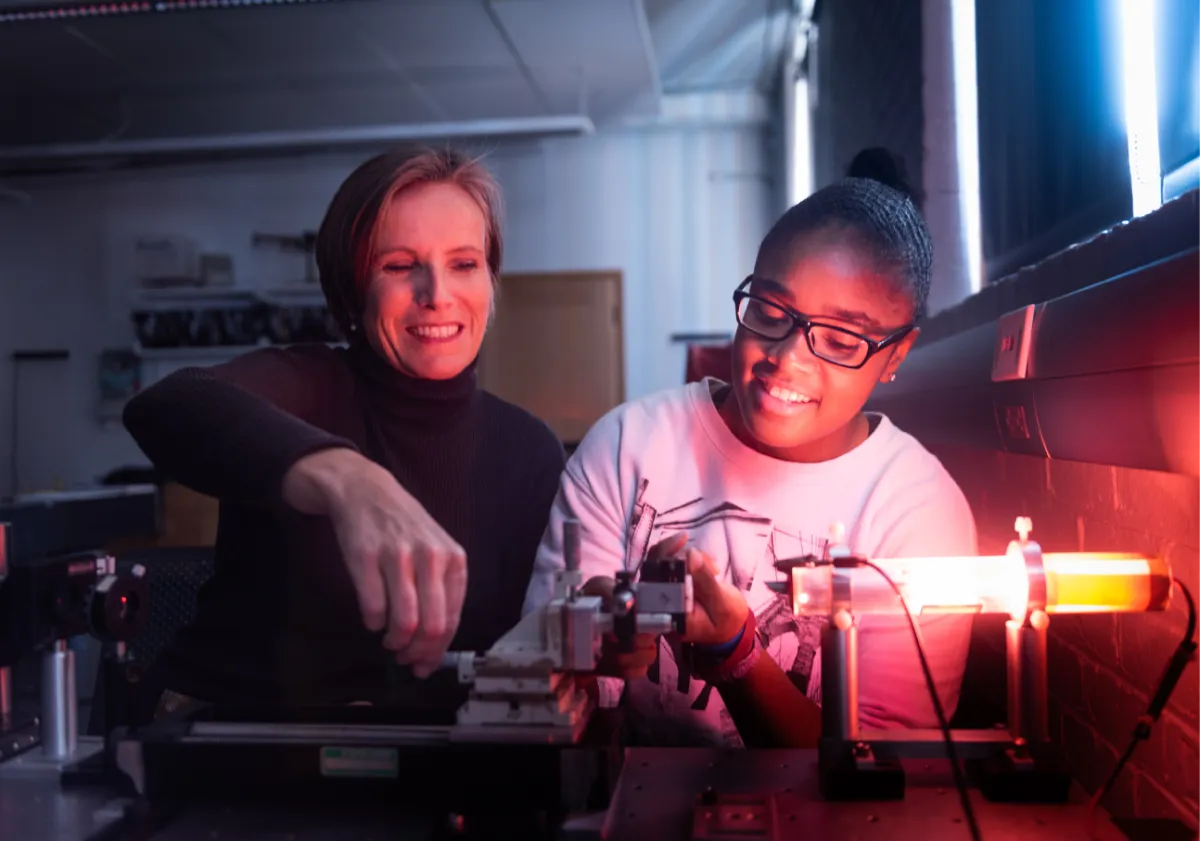

Professor of Physics Jenny Magnes is testing to see whether AI can solve homework problems issued in her Electromagnetism course. She plans to use AI’s mistakes as teachable moments to help students critically analyze AI output and reinforce key concepts. She found that some of AI’s answers referenced mathematical notations not used in the class textbook, such as the Einstein summation notation. Magnes asked AI to create and format a reference document teaching Einstein notation, saving her valuable time.

Still, she and other faculty members continue to grapple with where to draw the line on how students use AI in and for class.

The return of blue books?

Gordon’s approach to AI in his classes has been to ask students who’ve used AI to complete an assignment to provide a brief written statement on how, or to show him their full chatbot transcript. “We need to know, where are you in the work?” he says.

Many of his students ask AI questions like, “I’m stuck with this, can you help?” Gordon says. “Things they would come to office hours for. Now, they’re getting office hours at 3:00 a.m. I don’t offer that—fine.” Last year, however, one student’s transcript began with the query “I have a project due in five days,” and then AI ended up doing the work.

“That was not a project that turned out very well,” Gordon says. “There was a disturbing lack of human interaction. That was not an ideal way to use AI.”

To safeguard against problematic usage, Vassar professors are considering reviving ways of evaluating students that have been less common in recent years—from handwritten blue-book exams to oral evaluations—or giving more weight to in-person, interactive aspects of pedagogy, such as class participation. “These are things we’re definitely talking about,” says Gordon. And it’s a trend that’s building at colleges and universities nationwide.

The Wall Street Journal reported that blue-book sales increased by almost 50 percent at the University of Florida and by 80 percent at the University of California, Berkeley, over the last two years. Gordon will add an in-person midterm exam for the first time ever to his Natural Language Processing course this fall. In the age of AI, “I just don’t trust that homework assignments are an accurate way to assess what students know,” he says. John Long says his Introduction to Biology quizzes are in person and on paper, too.

Still, Gordon says, colleges and universities must be mindful of the reasons alternative assignments became popular in the first place: for example, to prevent panic attacks during test taking, or to accommodate students who are shy about speaking up in class. “This is where we need to be careful,” says Gordon. “There’s definitely that trade-off.”

Professor of Cognitive Science Ken Livingston worries that relying too much on face-to-face assessment could leave students barely writing at all and less capable of refining and evolving their thinking.

“There are ways of thinking that are hard to master if you don’t learn how to write,” he says. “Losing our ability to think in complex, deep ways, that would be a real hit to the culture, to human beings, and to our ability to do deep work and even to enjoy our lives in a deep and profound way.”

“It’s a delicate balance,” he adds. Professors have to become savvier about AI so they can teach students to use these systems and, ultimately, to partner with the technology in the workplace. But students also need support in preserving and refining skills that will be more important as AI evolves, including deep thinking.

Lately, more of Livingston’s assignments require students to be in class, in person to collect data, write reports, and draw and label sketches. For the six pieces of writing he assigns during his first-year writing seminar, he meets in person with every student during the drafting stage to ensure they’re grasping the material and not outsourcing to AI.

“And I do spend a lot of time talking with students and thinking about the long term, not just the short term,” he says, “about what kind of mind you want to have when you leave [Vassar], and what you need to do to achieve that goal. Some of it is trying to provide that larger context, and then hope students have their own self-interest at heart.”

AI and the enduring values of the liberal arts

As AI grows in sophistication, colleges are working faster to establish clear guardrails and guidance around teaching and learning in the age of AI that position them as leaders—promoting responsible AI use, defining and discouraging misuse, and shaping how students engage with the technology. At Vassar, ensuring safe AI that doesn’t expose students’ and faculty’s information to the outside world is a core concern for the college’s AI working group, comprising administrators and several dozen professors across disciplines.

Alongside security and data privacy concerns, AI raises other important concerns, like its impact on the climate, says Vassar’s CIO Carlos Garcia. “We also need to be facilitating discourses on campus about the ripple effects of issues associated with AI,” he says. “Given the inevitability of this technology to transform the way that humans learn and work, we’ve got to grapple with those issues and prepare students for engaging in problem-solving when they leave.”

The working group is beta testing solutions like ChatGPT, Claude, and Perplexity to see which could be the best fit for most Vassar students and faculty—if the college pursues a license agreement with a major provider. That’s been the path other universities have forged, including Duke, which began offering unlimited ChatGPT access to students, faculty, and staff in June. Other universities, such as the University of Michigan, have developed their own homegrown generative AI tools.

“There’s an incredible push in academics to pick one type of AI and subscribe to a campus-wide license and give it to everyone,” says Academic Computing Consultant for the Sciences and Laybourne Visualization Laboratory Manager Susannah Zhang, who’s leading the working group. “We don’t want to jump into that, and then find it doesn’t meet the needs of most departments on campus.”

Making these types of broad decisions about AI usage for faculty, staff, and students is especially challenging given the current dearth of government regulations around the industry, adds Garcia. “Helping to set standards and best practices then becomes an opportunity for [Vassar] to lead if we position ourselves right,” he says.

Any AI will have its limitations, Zhang notes, and Vassar will have to clearly outline those for students and faculty—creating an AI best practices guide is on the group’s to-do list. But, no matter the platform, “Students have to continue to build their own foundational knowledge on a topic,” Zhang says. “AI is not a substitute for learning the subject matter.”

Long contends that integrating AI into higher education should be grounded in the core values of a liberal arts education. “Now is actually the time to double down on liberal arts, which has always been about critical thinking, a community of scholars, honesty, transparency, and responsibility,” he says.

Gordon wants those in academia to fight to maintain humanity. “I want our graduating seniors to know how to use AI effectively, in ways that make them more capable and more powerful as researchers and as people who are doing data analysis. It’s okay to have the computer as a collaborator. It’s not okay to replace yourself with a computer.”

D. Graham Burnett, who teaches the history of science at Princeton and will present as part of a Signature Program at The Vassar Institute for the Liberal Arts this fall, recently penned the essay “Will the Humanities Survive Artificial Intelligence?” for The New Yorker. In it, he argued that AI systems allow us to return to the reinvention of the humanities, of humanistic education itself. “But to be human is not to have answers,” he writes. “It is to have questions—and to live with them. The machines can’t do that for us. Not now, not ever.”

“But,” he suggests, “these systems have the power to return us to ourselves in new ways.”